Primitive Data Types

JavaScript has seven primitive data types: String, Number, Boolean, Undefined, Null, Symbol, and BigInt. Let's look at them one by one.

Number

Numbers are always so-called floating-point numbers, which means that they always have a decimal part (such as 7.7 or -2.715), even if we don't see it or don't write it.

For example, the value 20 that we have here is exactly the same as 20.0. Both are simply the number data type.

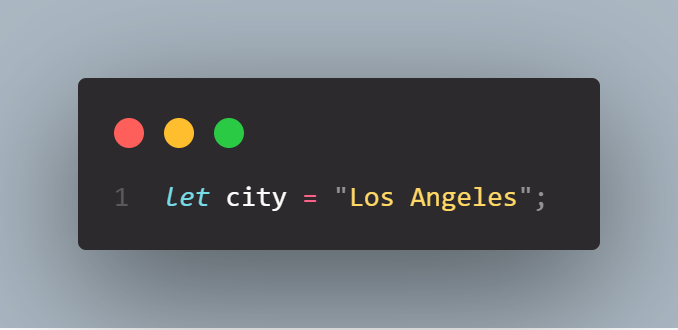
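The same idea can be checked directly in code. This is a minimal sketch; the variable names `age` and `ageWithDecimal` are hypothetical and used only for illustration.

```javascript
// Hypothetical variable names, used only for illustration.
const age = 20;
const ageWithDecimal = 20.0;

// Both literals produce the same value of the same type.
console.log(age === ageWithDecimal); // true
console.log(typeof age);             // "number"
console.log(typeof 7.7);             // "number"
console.log(typeof -2.715);          // "number"
```

There is no separate integer type here: whole numbers and decimals both report `"number"`.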

String

Strings are simply sequences of characters, so they are used for text. In JavaScript, we can use either single quotes or double quotes to indicate that a value is a string.

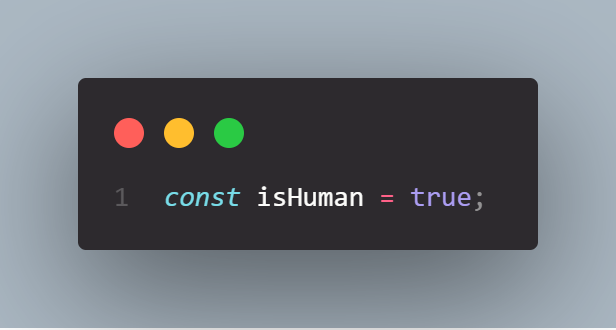
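As a small sketch of both quote styles, the snippet below creates two strings and joins them. The names `firstName`, `lastName`, and `fullName` are hypothetical examples, not taken from the screenshot.

```javascript
// Hypothetical variable names, used only for illustration.
const firstName = 'Ada';      // single quotes
const lastName = "Lovelace";  // double quotes

// The quote style does not change the type or the value.
console.log(typeof firstName);  // "string"
console.log('text' === "text"); // true

// Strings can be joined with the + operator.
const fullName = firstName + " " + lastName;
console.log(fullName); // Ada Lovelace
```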

Boolean

The boolean data type is a logical type that can only take one of two values: true or false. In other words, a boolean is always either true or false. We use boolean values to make decisions in code: we can decide whether certain blocks of code or other tasks should be executed based on whether a variable is true or false.
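A minimal sketch of such a decision might look like the following. The variable `isAdult` and the messages are hypothetical and only illustrate the idea.

```javascript
// Hypothetical variable, used only for illustration.
const isAdult = true;

let message;
if (isAdult) {
  // This block runs only when isAdult is true.
  message = "Access granted";
} else {
  // This block runs only when isAdult is false.
  message = "Access denied";
}

console.log(typeof isAdult); // "boolean"
console.log(message);        // Access granted
```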

Undefined

Undefined is the value held by a variable that is not yet defined, that is, a variable that we have declared but not yet assigned a value. In other words, although the variable exists, we haven't given it a value yet, so it holds the value undefined.

For example, like this food variable here:
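A minimal sketch of what such a declaration could look like, assuming `food` is declared with `let` and not assigned anything (the value `"pizza"` assigned later is a hypothetical example):

```javascript
// Declared, but no value assigned yet.
let food;

console.log(food);        // undefined
console.log(typeof food); // "undefined"

// Once a value is assigned, the variable is no longer undefined.
// "pizza" is a hypothetical value, used only for illustration.
food = "pizza";
console.log(typeof food); // "string"
```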

Null

We can declare a variable with the value null if we deliberately don’t want it to hold any value. Null does not mean 0 or undefined: the variable deliberately points to nothing.
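The difference from 0 and undefined can be sketched as follows. The variable name `selectedUser` is hypothetical.

```javascript
// Hypothetical variable: deliberately set to "no value".
let selectedUser = null;

console.log(selectedUser === null);      // true
console.log(selectedUser === 0);         // false
console.log(selectedUser === undefined); // false

// A long-standing quirk of the language: typeof reports "object" for null,
// so compare against null directly instead of relying on typeof.
console.log(typeof selectedUser); // "object"
```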

Symbol

A symbol is a unique and immutable value (it cannot be changed). You can use symbols in many different ways, but most of the time you'll use them in places where you would otherwise use a string or a number. Strings and numbers aren't unique themselves, so if you ever want a value that is guaranteed to be unique, symbols are the way to go.
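The uniqueness can be seen in a short sketch. The names `idA`, `idB`, and `user` are hypothetical, and using a symbol as an object key is just one possible use.

```javascript
// Two symbols with the same description are still different values.
const idA = Symbol("id");
const idB = Symbol("id");

console.log(idA === idB);   // false
console.log(typeof idA);    // "symbol"
console.log("id" === "id"); // true: equal strings are not unique

// A symbol can be used as a property key where a string would otherwise go.
const user = { name: "Ada", [idA]: 42 };
console.log(user[idA]);                // 42
console.log(Object.keys(user).length); // 1: symbol keys are not listed
```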

BigInt

BigInt is for integers that are too large to be represented reliably by the number type, that is, integers beyond Number.MAX_SAFE_INTEGER (2^53 - 1). So basically it's another type for numbers.
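A minimal sketch of where the number type runs out of precision and how a BigInt literal (an integer followed by `n`) avoids the problem. The variable names `maxSafe` and `big` are hypothetical.

```javascript
// The largest integer the number type can represent exactly.
const maxSafe = Number.MAX_SAFE_INTEGER; // 9007199254740991

// Beyond this point, distinct integers can collapse into the same number.
console.log(maxSafe + 1 === maxSafe + 2); // true

// A BigInt literal is written with a trailing n and keeps full precision.
const big = 9007199254740991n;
console.log(big + 2n === 9007199254740993n); // true
console.log(typeof big);                     // "bigint"
```

Note that BigInt and number values cannot be mixed in arithmetic without an explicit conversion, which is why the example adds `2n` rather than `2`.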